You Can’t Delegate What You Can’t Define

Why most AI assistants fail (and how to fix it before you build)

Last week, a business owner told me their “AI assistant” wasn’t working.

“It keeps giving me answers I have to completely rewrite,” they said. “I thought this was supposed to save time?”

I asked one question:

“What’s the first step in your decision-making?”

Silence.

Then: “Well… it depends.”

And there’s the issue.

The truth

You can’t delegate what you can’t define.

If you can’t explain your criteria in clear terms, an AI assistant can’t execute them, because it has nothing real to follow. It’ll guess. And you’ll edit. Forever.

Why most “AI staff” fail

Everyone’s rushing to build AI agents, custom GPTs, and digital workers.

The promise is seductive:

“Let AI handle your emails.”

“Automate follow-ups.”

“Build an AI team that works 24/7.”

But here’s what happens in real life:

Scenario 1: The Email Assistant

It drafts something generic.

You spend 10 minutes fixing it.

You think: “I could’ve just written it.”

Why it failed: you never defined what makes your follow-ups effective. The AI is guessing your standards.

Scenario 2: The Client Fit Decision

You ask: “Should I take this client?”

It gives a thoughtful answer… that doesn’t match how you evaluate people.

Why it failed: you never mapped your qualification criteria. The AI used generic business logic, not your business logic.

The pattern is simple:

Most AI “employees” fail because the judgment was never documented.

What documenting judgment actually is

This isn’t “write SOPs for everything.”

It’s defining decision rules so clearly that someone else—human or AI—could execute them consistently.

Email communication (example):

A strong follow-up email must:

reference a specific detail from the last conversation

add new value (resource, insight, update)

include one clear next step

avoid filler like “just checking in”

match the relationship stage (warm ≠ cold)

Now AI can draft your email, not a generic email.
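One way to hand this checklist to an assistant is to store it as data and render it into the instructions you give it. The sketch below is a minimal illustration in Python, not a prescribed implementation: the names FOLLOW_UP_RULES, FILLER_PHRASES, build_brief, and uses_filler are hypothetical, and the filler list holds only the one phrase named above.

```python
# Hypothetical sketch: the follow-up criteria above, stored as plain data.
FOLLOW_UP_RULES = (
    "Reference a specific detail from the last conversation.",
    "Add new value (resource, insight, update).",
    "Include one clear next step.",
    "Avoid filler like 'just checking in'.",
    "Match the relationship stage (warm is not cold).",
)

# Only the one phrase named in the checklist; extend with your own.
FILLER_PHRASES = ("just checking in",)


def build_brief(rules=FOLLOW_UP_RULES) -> str:
    """Render the rules as a numbered brief for an assistant's instructions."""
    lines = ["A strong follow-up email must:"]
    for number, rule in enumerate(rules, start=1):
        lines.append(f"{number}. {rule}")
    return "\n".join(lines)


def uses_filler(draft: str, phrases=FILLER_PHRASES) -> bool:
    """Return True if the draft contains a forbidden filler phrase (case-insensitive)."""
    lowered = draft.lower()
    return any(phrase in lowered for phrase in phrases)
```

Calling build_brief() gives you text to paste into the assistant's instructions, and uses_filler(draft) is a quick check you can run on anything it writes back. Rules that need judgment, such as matching the relationship stage, still need a human or a model to evaluate.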

Client qualification (example):

I take clients who:

meet a minimum budget of $X+

want process work (not quick fixes)

implement, not just consume advice

respond within 48 hours

And I avoid clients who signal urgency chaos (“I need this yesterday”).

Now AI can help you qualify instead of hallucinating.
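Written as if/then rules, these criteria might look like the sketch below. It is an illustration under assumptions: Prospect, its field names, and qualify are hypothetical, and the minimum budget is passed in by the caller because the "$X" above is yours to set.

```python
from dataclasses import dataclass


@dataclass
class Prospect:
    """Hypothetical record of what you learned about a prospect."""
    budget: float
    wants_process_work: bool      # False means they want a quick fix
    implements_advice: bool       # False means they only consume advice
    response_hours: float         # typical time they take to reply
    signals_urgency_chaos: bool   # e.g. "I need this yesterday"


def qualify(prospect: Prospect, min_budget: float):
    """Apply each rule in turn; return (fits, reasons_against)."""
    reasons = []
    if prospect.budget < min_budget:
        reasons.append("budget below minimum")
    if not prospect.wants_process_work:
        reasons.append("wants a quick fix, not process work")
    if not prospect.implements_advice:
        reasons.append("consumes advice without implementing")
    if prospect.response_hours > 48:
        reasons.append("takes longer than 48 hours to respond")
    if prospect.signals_urgency_chaos:
        reasons.append("signals urgency chaos")
    return (not reasons, reasons)
```

Returning the reasons alongside the yes/no answer is a deliberate choice here: when the result disagrees with your gut, the list shows which rule needs refining.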

The framework: document it, then delegate it

Pick one decision you make repeatedly (the one that drains time).

Review your last 5 instances: what happened, what you chose, why.

Extract your rules in simple if/then language.

Test it on a new scenario and refine until it matches reality.

Only then delegate it—to a person, an SOP, or AI.
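The review-and-test steps amount to a small backtest: run your drafted rule against decisions you already made and see where it disagrees. The sketch below is a minimal version in Python. The backtest function and the shape of each case (a situation paired with what you actually chose) are assumptions for illustration.

```python
def backtest(rule, past_cases):
    """Compare a drafted rule against past decisions.

    rule: a function that takes a situation and returns a decision.
    past_cases: list of (situation, what_you_actually_chose) pairs.
    Returns (number_matched, mismatches), where mismatches lists the
    cases in which the rule disagreed with your real decision.
    """
    mismatches = [
        (situation, chosen)
        for situation, chosen in past_cases
        if rule(situation) != chosen
    ]
    matched = len(past_cases) - len(mismatches)
    return matched, mismatches
```

Each mismatch is a prompt to refine: either the rule is missing a condition, or the past decision was an exception worth writing down. Once the rule matches your recent instances and holds on a new scenario, it is ready to delegate.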

This is the “process-first” part most people skip. (This is exactly where I start: Listen → Review → Architect.)

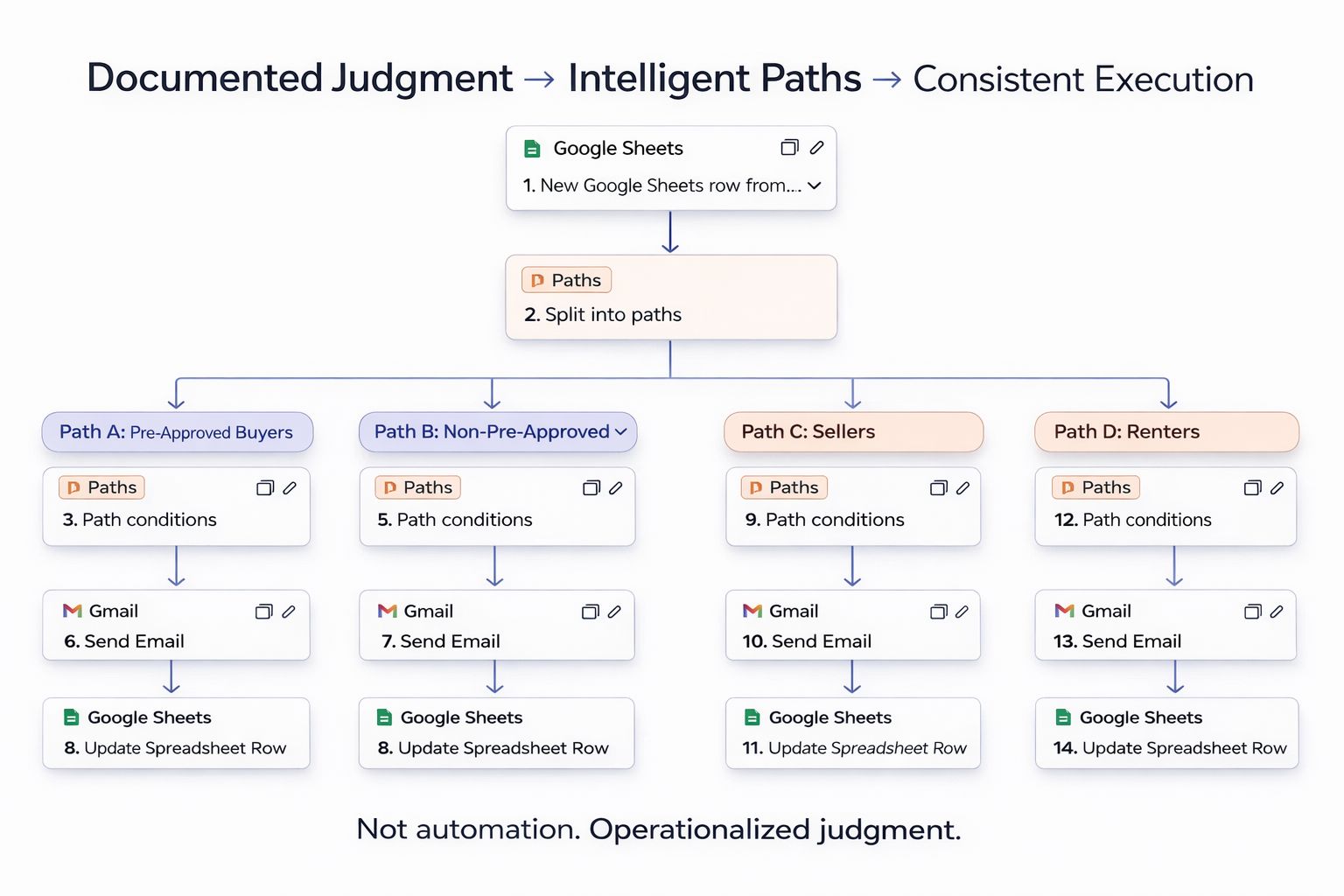

Real example: 12–15 hours/week recovered

I worked with a real estate professional getting buried in follow-up emails—20–30 conversations moving at once.

Their AI assistant wasn’t the solution. It was the symptom.

They had never defined:

what “high intent” looked like

what value to send at each stage

what their voice was (and what phrases were forbidden)

when to stop following up

We spent 90 minutes documenting their rules.

Then we built the AI assistant using THAT documentation.

This is what it looks like!

Result:

drafts matched their voice

edits dropped to 1–2 minutes

fewer missed leads

better responses (people noticed)

time saved: 12–15 hours/week

payback: ~2 weeks

The difference wasn’t the model.

It was documented judgment.

Your next move

Don’t ask: “What AI tools should I use?”

Ask: “What decision do I make that I can’t clearly explain?”

That’s your bottleneck.

Pick one decision. Spend 30 minutes documenting your rules this week.

If you want, reply with the decision you’re stuck on and I’ll send back a simple criteria checklist you can use to document it fast.

— Jay

Founder, Clarity2Scale Consulting

Process-First AI Strategist